At the Hot Chips 2023 conference, Samsung presented its latest processing-in-memory (PIM) chips, which build compute logic into the memory itself. The two designs, high bandwidth memory with processing-in-memory (HBM-PIM) and LPDDR-PIM, have been developed with future AI applications in mind.

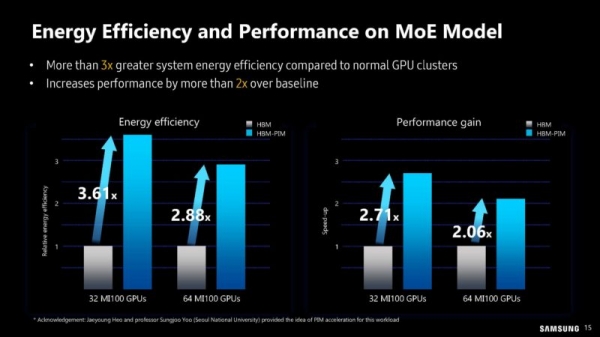

According to Samsung, using HBM-PIM for generative AI applications doubles accelerator performance and power efficiency compared with conventional HBM. To demonstrate this, Samsung built an HBM-PIM cluster around AMD's MI100 GPU to verify a mixture-of-experts (MoE) model. Running on 96 MI100 units fitted with HBM-PIM, the MoE model was accelerated twofold compared with standard HBM and achieved three times the power efficiency.
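To see what the cluster figures imply together, the short Python sketch below turns a speedup and a power-efficiency gain into relative power draw and energy per job. It assumes that "power efficiency" means performance per watt, which the announcement does not spell out; the function name `relative_power_and_energy` is made up for this illustration.

```python
def relative_power_and_energy(speedup: float, efficiency_gain: float) -> tuple[float, float]:
    """Return (relative average power, relative energy per job) versus the baseline.

    Assumption: "power efficiency" means performance per watt.
    """
    if speedup <= 0 or efficiency_gain <= 0:
        raise ValueError("speedup and efficiency_gain must be positive")
    # power = performance / (performance per watt)
    relative_power = speedup / efficiency_gain
    # energy per job = power * time = (speedup / efficiency_gain) * (1 / speedup)
    relative_energy = 1.0 / efficiency_gain
    return relative_power, relative_energy


# Figures reported for the MoE cluster: 2x acceleration, 3x power efficiency.
power, energy = relative_power_and_energy(speedup=2.0, efficiency_gain=3.0)
print(f"relative power draw: {power:.2f}")
print(f"relative energy per job: {energy:.2f}")
```

Under that assumption, the cluster would draw about two thirds of the baseline power while finishing each job in half the time, so each job would cost roughly one third of the energy.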

A key feature of HBM-PIM is that it moves some of the CPU's computation into the memory itself, which reduces the amount of data that has to travel between the CPU and memory. Because AI applications typically work on vast amounts of data, the design is intended to relieve memory bottlenecks.
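A rough way to picture the saving is to count the bytes that cross the memory bus for a single matrix-vector product, a common operation in AI inference. The Python sketch below is an idealized model, not a description of Samsung's design: it assumes the weight matrix already sits in memory, that a PIM device can compute the whole product in place, and that commands, caching, and batching can be ignored. The function name and the 4096 x 4096 layer size are chosen only for illustration.

```python
def gemv_bus_traffic(rows: int, cols: int, bytes_per_value: int = 2) -> tuple[int, int]:
    """Bytes crossing the memory bus for y = W @ x, with W of shape (rows, cols).

    Returns (conventional_bytes, pim_bytes) under an idealized model.
    """
    weight_bytes = rows * cols * bytes_per_value
    input_bytes = cols * bytes_per_value    # vector x sent to memory or read by the processor
    output_bytes = rows * bytes_per_value   # vector y returned or written back
    # Conventional: the processor reads every weight plus x, then writes y.
    conventional = weight_bytes + input_bytes + output_bytes
    # Idealized PIM: the weights stay in memory; only x and y cross the bus.
    pim = input_bytes + output_bytes
    return conventional, pim


# Hypothetical 4096 x 4096 layer with 16-bit values.
conventional, pim = gemv_bus_traffic(4096, 4096)
print(f"conventional: {conventional:,} bytes")
print(f"idealized PIM: {pim:,} bytes")
print(f"traffic reduction: {conventional / pim:.0f}x")
```

Real devices will fall well short of this idealized ratio, but the model shows why keeping large weight matrices in place targets the dominant share of the traffic.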

Samsung also presented LPDDR-PIM, which applies PIM technology to mobile DRAM so that calculations can be performed directly on the device itself. Samsung said that LPDDR-PIM offers a bandwidth of 102.4GB/s and consumes 72% less power than conventional DRAM.

Taken together, Samsung's PIM chips are aimed at speeding up AI processing, improving power efficiency, and easing memory performance bottlenecks.